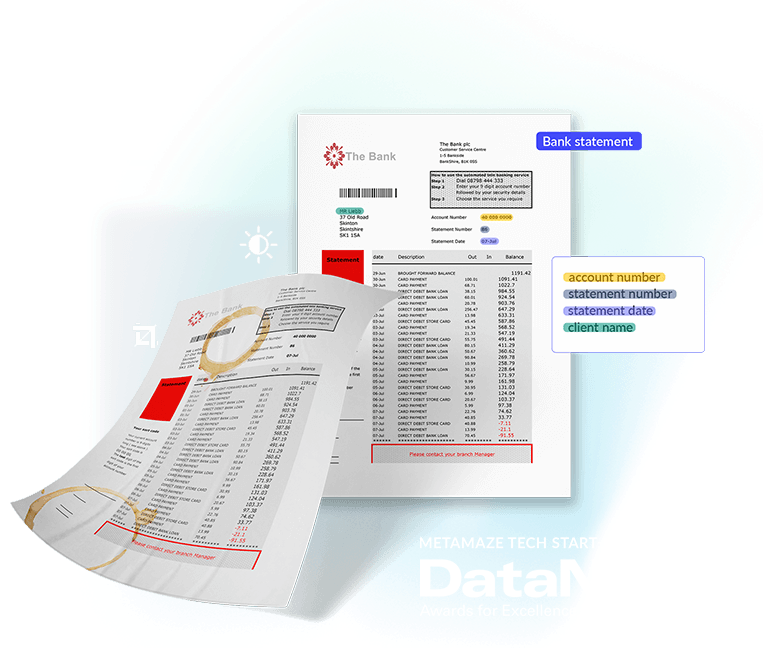

More performant shared models with Hydra

Introducing the new Hydra model architecture for Intelligent Document Processing. Discover more about it in this blog.

TL;DR:

- Train one model based on different sets of entities, with data from different projects

- No need to relabel existing training data when adding/removing entities

- Up to 20% higher Straight Through Processing Rate

- Up to 9% higher F1 score

- Up to 18% less false positives

- No actions needed, we take care of everything 🤗

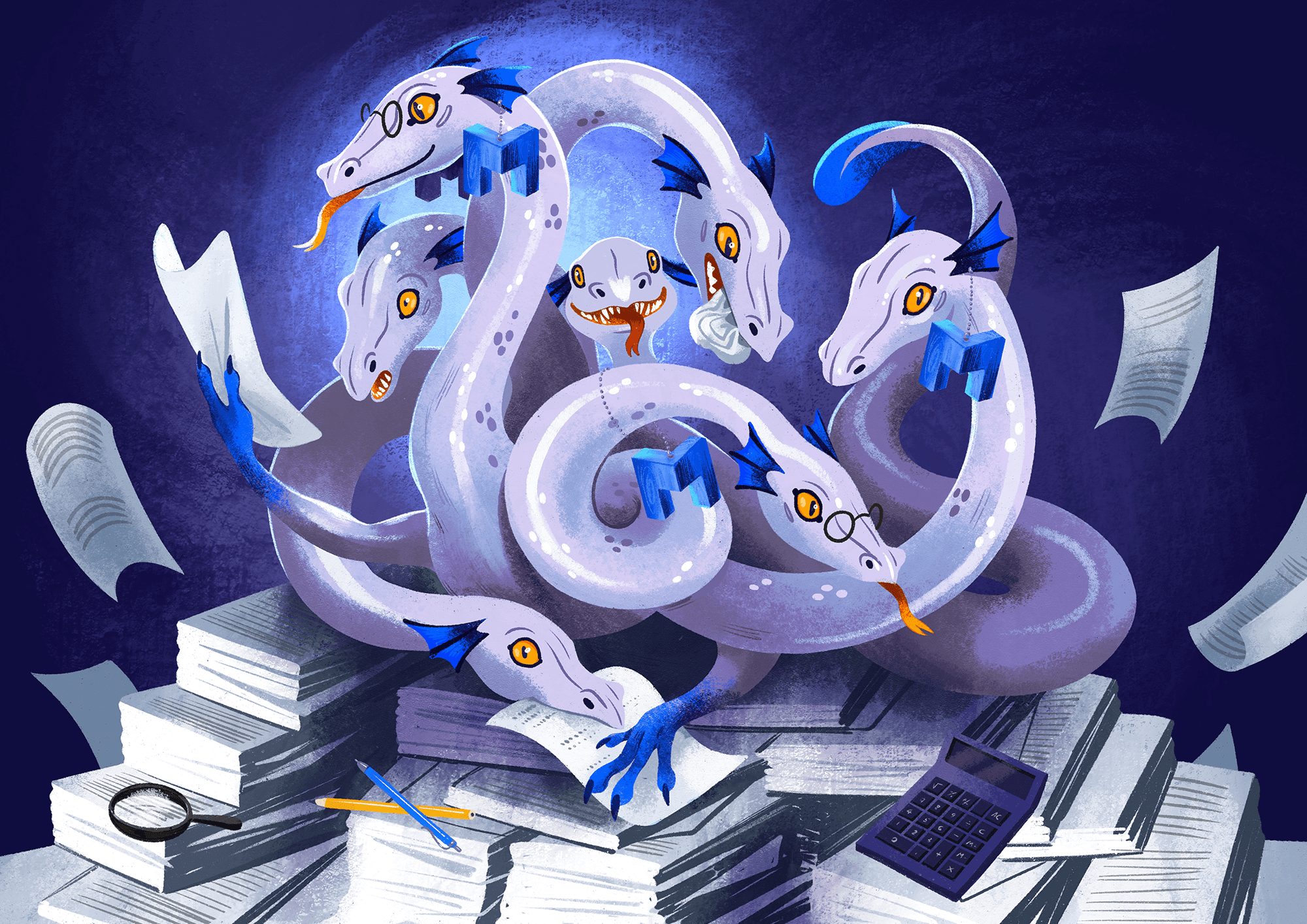

Take a look at this beautiful illustration that was developed for the launch of the new Hydra model architecture.

Introduction

An important feature of any Adaptive IDP platform is the possibility to train one single model on data from several sources or projects, even if those projects have different entity extraction requirements. Ideally, instead of annotating 500 documents and training a specific model for each project, you simply annotate 500 documents across all projects and train one single model that can be used in all projects, regardless of the differences in requirements. Overall, you will spend much less time annotating data since less data is needed. Additionally, the size of the training data set remains small and manageable, and you only have one model in the end, facilitating model maintenance.

Meet Hydra!

The Metamaze Machine Learning team has developed a new model architecture to improve the shared model experience: Hydra! This multi-headed beast is even better than its predecessor at dealing with partially annotated data, typical for model sharing across projects with different entity extraction requirements. If you need IBAN numbers in project A, but not in project B, but both projects use the same model, simply do not annotate the IBAN number in project B. The model will still learn to predict this entity, thanks to the training data in project A where it is annotated. If at a certain point, you need the IBAN number in project B after all, you will be able to get predictions for it, since the model knows this entity. No need to relabel all the training data from project B!

Why we launched this multi-headed beast called Hydra

With the previous model architecture, we dealt with partially annotated data by adding the data throughout several training steps and in a specific order, depending on what was annotated on it. Even though this technique generally gave satisfactory results in terms of accuracy, there was an increased risk of the model forgetting previously learned information, causing it not to perform equally well in all projects. The new Hydra model solves this issue thanks to its many heads: each head of Hydra is specialized in learning one single entity. All training data is added at once but only looked at by a certain head if the data contains information concerning the entity it specializes in. Adding all data at once reduces the risk of forgetting since all the information that must be learned is presented to the model throughout the whole training process, instead of only during a part of it.

Which improvements can you expect?

This depends on your training data.

If your training data is fully annotated, nothing will change. If however you use a shared document type, or enabled or disabled entities after already having labeled training data, your data is highly likely to be partially annotated. In this case you can expect up to 20% higher Straight Through Processing Rate, 9% higher F1 score and a decrease in False Discovery Rate of up to 18%. The expected impact depends on the type of entities in your data (simple vs. composite), their frequency, and as always, the quality of the training data.

What should you do to take advantage of these improvements? Nothing at all: you will automatically be transitioned to the Hydra model with the next full training you trigger. Enjoy watching your automated document flow becoming even more performant without doing a thing!

Curious to see our platform in action? Book your Metamaze demo asap.

Table of Contents

Request a Metamaze demo

Learn how Metamaze can help you automate any document and email in your organization. Book a demo with one of our experts and we’ll give you a quick tour of our product.