RELEASE NOTES

Release 2.1

Task module revamped

The quality of your training data determines the quality of your model's predictions. It is therefore essential to perform regular quality checks, especially before (re)training a model. We built the Tasks module for this purpose, so that annotated documents can be reviewed easily. In this release, we have made reviewing easier by adding misannotation hints, which suggest annotations that might be missing or redundant. We have also simplified annotation tasks by adding predictions to unlabelled documents once a training is present ("model-assisted labelling"). Because the user no longer annotates from scratch, this can save a significant amount of annotation time.

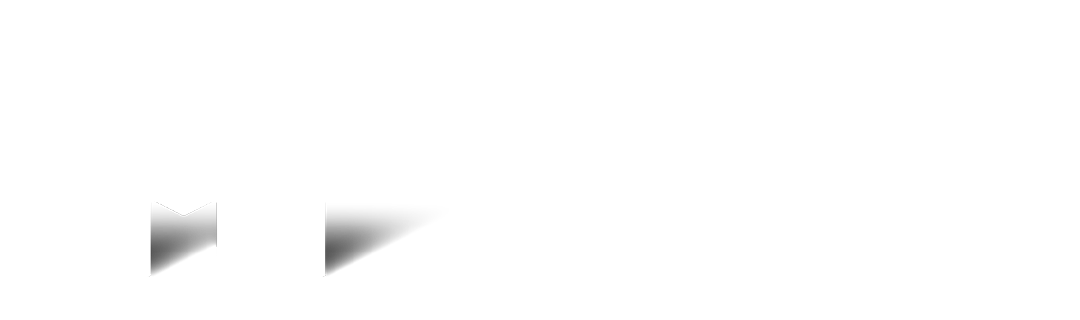

- Redesigned the create task modal

- Model-assisted labelling is added to annotation tasks (when a trained model is available)

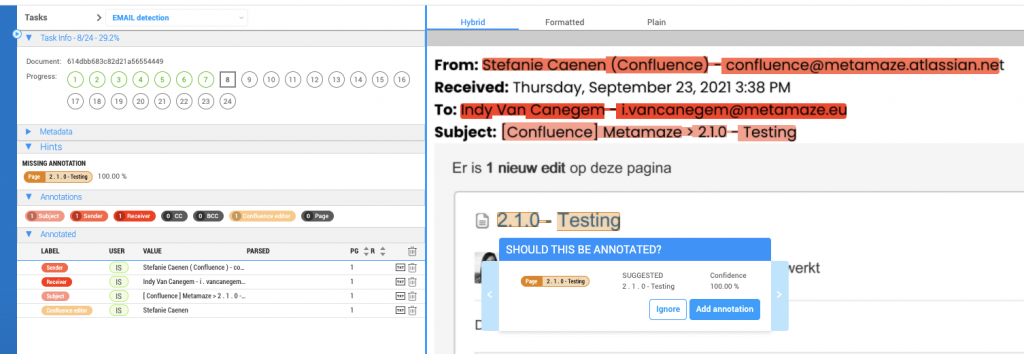

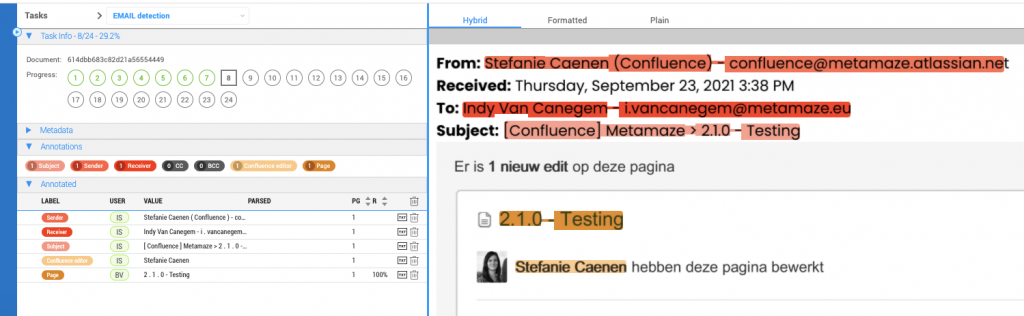

- Misannotation hints are enabled for review tasks (when a trained model is available)

Example of a misannotation hint

Example after the user approved the hint (annotation updated)

Export to CSV

Besides JSON, we now support CSV as an output format for all data extracted, validated, and enriched by Metamaze.
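To illustrate how the two formats relate, the sketch below flattens a JSON-style record into CSV rows using Python's standard library. The field names (`document_id`, `entities`, `name`, `value`) and the one-row-per-entity layout are assumptions made for illustration; they are not the actual Metamaze export schema.

```python
import csv
import io

# Hypothetical JSON-style export record; the field names are illustrative
# only and do not reflect the real Metamaze output schema.
document = {
    "document_id": "doc-001",
    "entities": [
        {"name": "invoice_number", "value": "INV-42"},
        {"name": "total_amount", "value": "199.00"},
    ],
}


def to_csv(doc):
    """Flatten one document into CSV text, one row per extracted entity."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=["document_id", "name", "value"],
        lineterminator="\n",
    )
    writer.writeheader()
    for entity in doc["entities"]:
        # Repeat the document identifier on every row so each row stands alone.
        writer.writerow({"document_id": doc["document_id"], **entity})
    return buffer.getvalue()


csv_text = to_csv(document)
print(csv_text)
```

A flat layout like this tends to suit spreadsheets and simple imports, while JSON remains the better fit when nested structure needs to be preserved.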

Other improvements

Suggested tasks are also available when no training is present

Suggested tasks now also appear when no training is present. Before you train your first model, you will want to annotate the uploaded documents and then review those annotations, and a task is the easiest way to do both.

Reviewing a failed document is more intuitive

When reviewing a failed document, you can either confirm the failed status, which reuses the existing failure reason, or unmark the document as failed.