Why we build Metamaze on data-centric AI

By Jo Cijnsmans | June 10, 2022

From the very start of Metamaze, we were convinced that our platform should be built on data-centric AI. The topic is getting a lot of attention right now, so we decided to write this blog post about it.

What is data-centric AI?

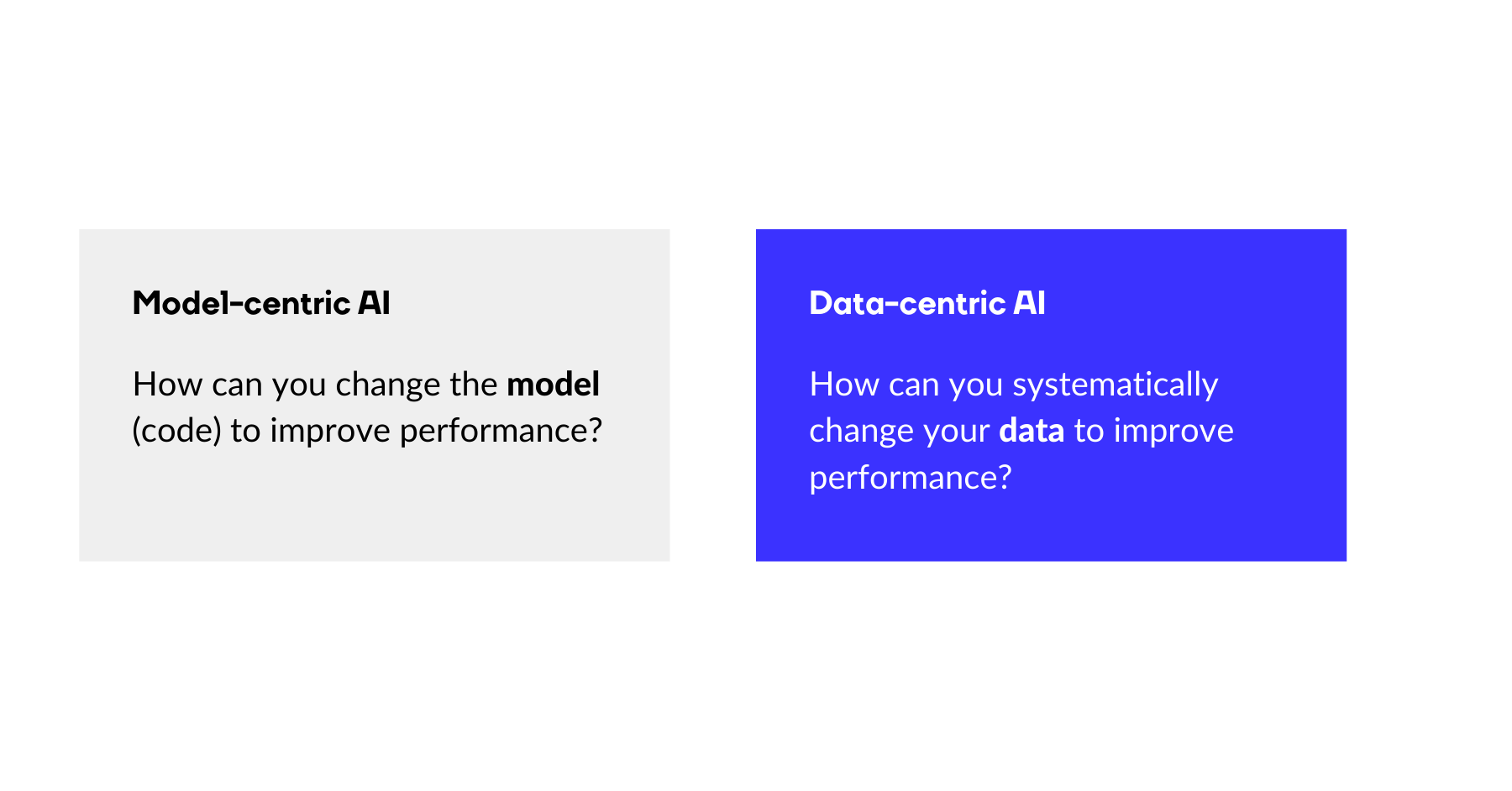

When building any machine learning model, and especially in an Intelligent Document Processing platform, data and models go hand in hand. In the AI world, most of the effort has gone into the second part: models. Model-centric AI is about optimizing the algorithms behind a model so that it is as accurate as possible on a fixed dataset. But improving the model has diminishing returns: each further gain becomes harder and harder to achieve.

Our entire machine learning team knows that our models are already state-of-the-art, and that focusing solely on improving the model architectures might yield only a few extra (tenths of a) percentage points of accuracy. The biggest gains no longer come from the models: they come from improving the data.

Our CTO Jos Polfliet usually explains it something like this:

“You can think of these models as a recipe. Most recipes are good. If you follow them, you’ll get a delicious meal. But if you make the recipe with rotten ingredients, chances are you won’t enjoy it. The same goes for AI models. If you train them on bad data, the outcome might not be what you expected. On the other hand, cooking with delicious, carefully grown and selected high-quality ingredients can turn a good dish into a savory masterpiece.”

Jos Polfliet - CTO Metamaze

From big data to good data

When asked, “How much data do you need?”, ML engineers often wittily reply with the single word “More!”. At Metamaze, we believe the truth is a bit more nuanced. The amount of information any given document adds to the accuracy of the model is not always the same.

When you focus on the quality and variety of your data rather than on volume alone, your chances of reaching higher accuracy improve.

So how do you get better data to improve model accuracy?

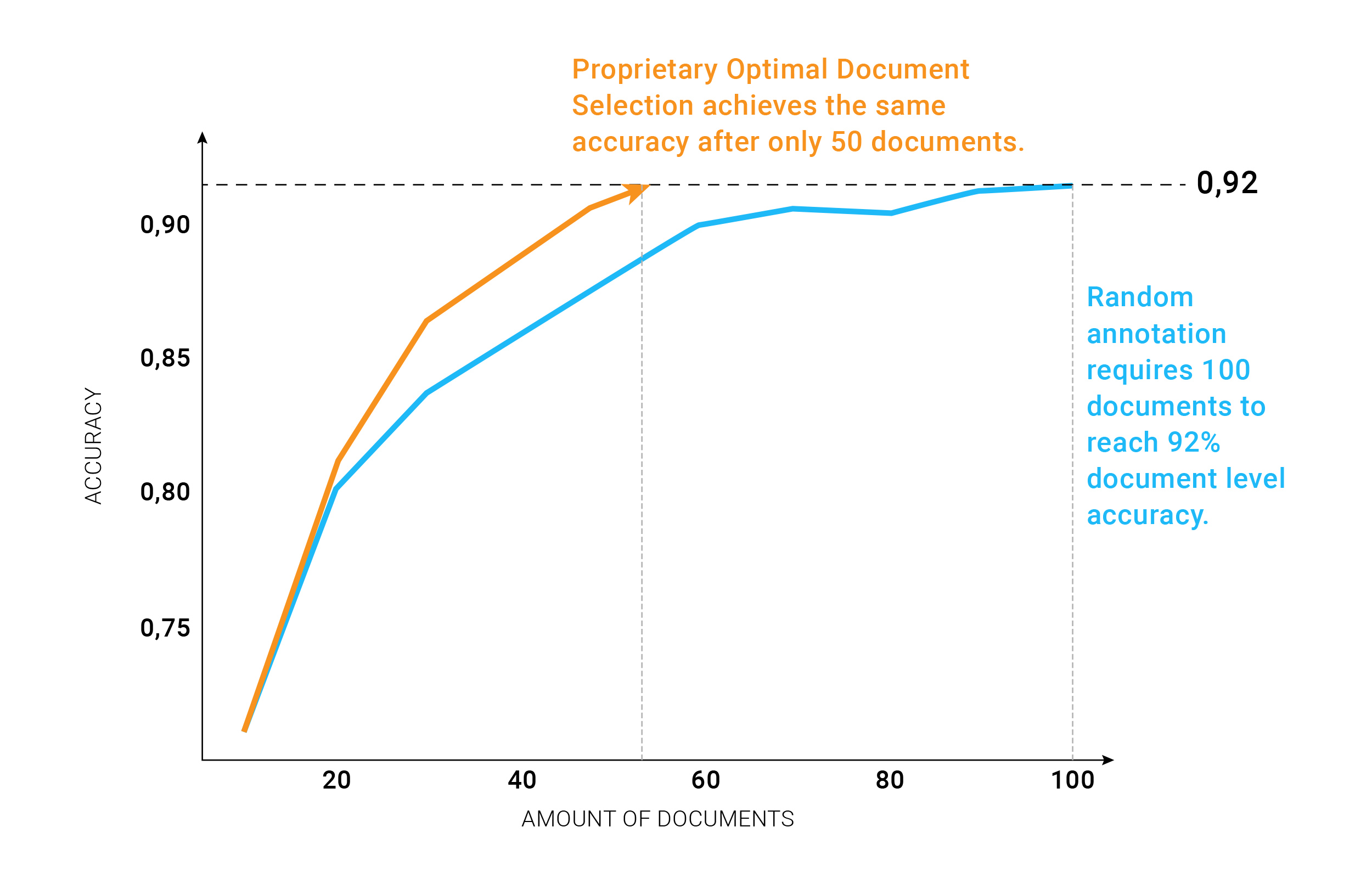

1. Carefully selecting which documents to annotate

When you train a model on 1,000 documents, chances are that only about 40% of them actually add valuable information. The other 60% are documents the model already recognizes perfectly, so annotating them and adding them to the training set teaches the model nothing new and does not lead to higher accuracy. Annotating them only costs valuable human effort and increases training time unnecessarily. So clearly, more data is not necessarily better.

2. The quality of annotations

In machine learning (a sub-domain of AI), data annotation is the process of labeling data to show the outcome you want your model to predict. You mark a dataset (by labeling, tagging, transcribing, or processing it) with the features you want your machine learning system to learn to recognize. Once your model is deployed, you want it to recognize those features on its own and decide or take some action as a result. But the way this data is annotated is crucial. Consistency is especially important: if the same kind of value is labeled differently from one document to the next, the model receives contradictory examples. For some examples of common annotation mistakes, click here.
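To make the consistency point concrete, here is a minimal sketch of one possible check. It is not how Metamaze works internally. It assumes annotations are available as simple `(text, label)` pairs, and the function name `find_inconsistent_labels` and the label names are made up for illustration. The heuristic flags values that received more than one label, so a human can decide whether that is a genuine difference or an annotation mistake.

```python
from collections import defaultdict


def find_inconsistent_labels(annotations):
    """Return annotated values that received more than one distinct label.

    `annotations` is an iterable of (text, label) pairs. This is a
    hypothetical data shape chosen for the example, not a Metamaze format.
    """
    labels_by_text = defaultdict(set)
    for text, label in annotations:
        # Normalize whitespace and case so "INV-001 " and "inv-001" match.
        labels_by_text[text.strip().lower()].add(label)
    return {
        text: sorted(labels)
        for text, labels in labels_by_text.items()
        if len(labels) > 1
    }


# Illustrative annotations with made-up label names.
annotations = [
    ("INV-001", "invoice_number"),
    ("inv-001", "order_number"),
    ("2022-06-10", "invoice_date"),
    ("2022-06-10", "invoice_date"),
]

conflicts = find_inconsistent_labels(annotations)
print(conflicts)
```

A flagged value is only a candidate for review: the same text can legitimately carry different labels in different documents.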

How we implemented data-centric AI in Metamaze

We have integrated specific models and features into our platform that focus solely on data quality. Let us introduce a few of them.

Optimal Document Selection Strategy

Only the documents that will improve the quality of the solution the fastest are pushed as suggested annotation tasks. Compared to other solutions, this reduces annotation effort by more than 50% while reaching the same level of quality.
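The selection strategy itself is not described in this post, so the following is only a rough sketch of the general idea, using least-confidence sampling as a stand-in. It assumes the current model can give a confidence score between 0 and 1 for each unlabeled document. The function name `select_for_annotation`, the file names, and the scores are all hypothetical.

```python
def select_for_annotation(doc_confidences, budget):
    """Pick the `budget` documents the current model is least confident about.

    `doc_confidences` maps a document id to the model's confidence in its
    own prediction (assumed to be in the range 0..1). Documents the model
    already handles with high confidence are left out, since annotating
    them is unlikely to teach the model anything new.
    """
    ranked = sorted(doc_confidences.items(), key=lambda item: item[1])
    return [doc_id for doc_id, _ in ranked[:budget]]


# Illustrative confidence scores for four unlabeled documents.
doc_confidences = {
    "invoice_001.pdf": 0.99,
    "invoice_002.pdf": 0.52,
    "invoice_003.pdf": 0.97,
    "invoice_004.pdf": 0.61,
}

suggested = select_for_annotation(doc_confidences, budget=2)
print(suggested)
```

Ranking by confidence is the simplest possible choice. A production strategy would likely also account for variety, so that the suggested documents are not all near-duplicates of each other.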

Want to know more about the technical details? Read our previous blog post about this.

Suggested review tasks

Suggested Review Tasks are tasks that are automatically created after you have trained a model. These tasks contain documents with annotations that the Metamaze A.I. believes are wrong and need to be verified. This auto-correct feature is an immense time-saver: your model becomes more accurate while less human training time is needed.
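As an illustration of the principle, and not of the actual Metamaze implementation, one common way to find suspicious annotations is to compare each human label with the trained model's own prediction, then flag the cases where the model disagrees with high confidence. The `Annotation` class, the `suggest_review_tasks` function, and the 0.9 threshold below are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Annotation:
    doc_id: str
    text: str
    human_label: str
    predicted_label: str
    confidence: float  # model's probability for predicted_label (assumed 0..1)


def suggest_review_tasks(annotations, threshold=0.9):
    """Return annotations where the model confidently disagrees with the human label."""
    return [
        a
        for a in annotations
        if a.predicted_label != a.human_label and a.confidence >= threshold
    ]


# Illustrative data with made-up documents and labels.
annotations = [
    Annotation("invoice_001.pdf", "2022-06-10", "invoice_date", "invoice_date", 0.98),
    Annotation("invoice_002.pdf", "INV-001", "order_number", "invoice_number", 0.96),
    Annotation("invoice_003.pdf", "121.00", "total_amount", "vat_amount", 0.55),
]

review_tasks = suggest_review_tasks(annotations)
for task in review_tasks:
    print(task.doc_id, task.text, task.human_label, "->", task.predicted_label)
```

Only the second annotation is flagged. The first agrees with the model, and the third disagrees but with too little confidence to be worth a reviewer's time.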

Want to know more about the technical details? Read our previous blog post.

Request a Metamaze demo

Learn how Metamaze can help you automate any document and email in your organization. Book a demo with one of our experts and we’ll give you a quick tour of our product.